7 Inference for numerical data: >2 samples

7.1 Introduction

In this chapter, we introduce one-way analysis of variance (ANOVA), which tests hypotheses about the means of more than two groups when the data in each group are approximately normally distributed. ANOVA was first introduced by the statistician R.A. Fisher in 1921 and is now widely applied in biomedical and other research fields.

ANOVA is a single global test of whether any of the group means differ from one another. The term “one-way” is used because the participants are separated into groups by a single factor (e.g., intervention).

7.2 The basic idea of ANOVA

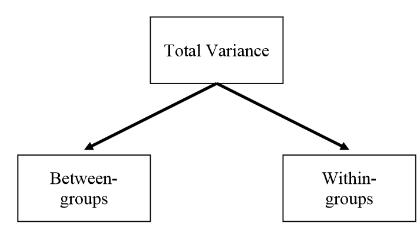

ANOVA separates the total variability in the data into two parts: the variation that can be attributed to differences between the groups (between-group or explained variation) and the random variation between individuals within each group (within-group or unexplained variation). We can then test whether the variability in the data comes mostly from within the groups or can be attributed to differences between the groups.
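Written in symbols, this partition is an identity between sums of squared deviations. The notation below is assumed for illustration and may differ from the notation used later in the chapter: $k$ groups, $n_j$ observations in group $j$, observation $x_{ij}$, group mean $\bar{x}_j$, and grand mean $\bar{x}$.

$$
\underbrace{\sum_{j=1}^{k}\sum_{i=1}^{n_j}\left(x_{ij}-\bar{x}\right)^2}_{\text{total}}
=
\underbrace{\sum_{j=1}^{k} n_j\left(\bar{x}_j-\bar{x}\right)^2}_{\text{between-group}}
+
\underbrace{\sum_{j=1}^{k}\sum_{i=1}^{n_j}\left(x_{ij}-\bar{x}_j\right)^2}_{\text{within-group}}
$$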

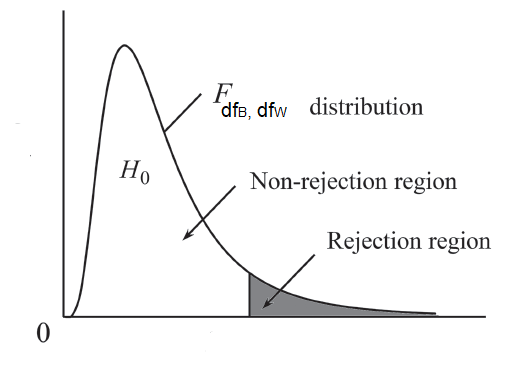

These components of variation are measured using variances, hence the name analysis of variance (ANOVA). Under the null hypothesis “the group means are the same”, the between-group variance will be similar to the within-group variance. If, however, there are differences between the groups, then the between-group variance will be larger than the within-group variance. The F-ratio, which is the test statistic for ANOVA, is obtained by dividing the between-group variance by the within-group variance, so values well above 1 count as evidence against the null hypothesis.
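The idea of comparing the two variances can be sketched in a few lines of Python. This is a minimal illustration rather than the chapter's own procedure: the function name `f_ratio` and the three small groups of values are made up for the example, and only the standard library is used.

```python
from statistics import mean


def f_ratio(groups):
    """Return (F, df_between, df_within) for a list of groups of numbers."""
    k = len(groups)                          # number of groups
    n_total = sum(len(g) for g in groups)    # total number of observations
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Between-group sum of squares: group means around the grand mean,
    # weighted by group size.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)

    # Within-group sum of squares: observations around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n_total - k

    # Mean squares are the variances that enter the F-ratio.
    ms_between = ss_between / df_between
    ms_within = ss_within / df_within
    return ms_between / ms_within, df_between, df_within


# Hypothetical data: three groups of three observations each.
groups = [[4.0, 5.0, 6.0], [6.0, 7.0, 8.0], [8.0, 9.0, 10.0]]
f_value, df_b, df_w = f_ratio(groups)
print(f"F = {f_value:.2f} (df = {df_b}, {df_w})")  # F = 12.00 (df = 2, 6)
```

Here the group means (5, 7, 9) are far apart relative to the spread inside each group, so the between-group variance (12) is much larger than the within-group variance (1) and the F-ratio is well above 1.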

Calculating the F-ratio involves computing the sums of squares (SS), the degrees of freedom (df), and finally the variances (mean squares, MS). Let’s look at each of them in more detail: